AI is no longer just about models. It's about where those models run. For physical operations like factories, energy grids, and traffic systems, the cloud alone isn't enough. AI needs to run closer to the data that drives decisions, and a recent session with Khasm Labs and Archetype AI surfaced what that looks like in production, drawing on a live deployment with the City of Bellevue.

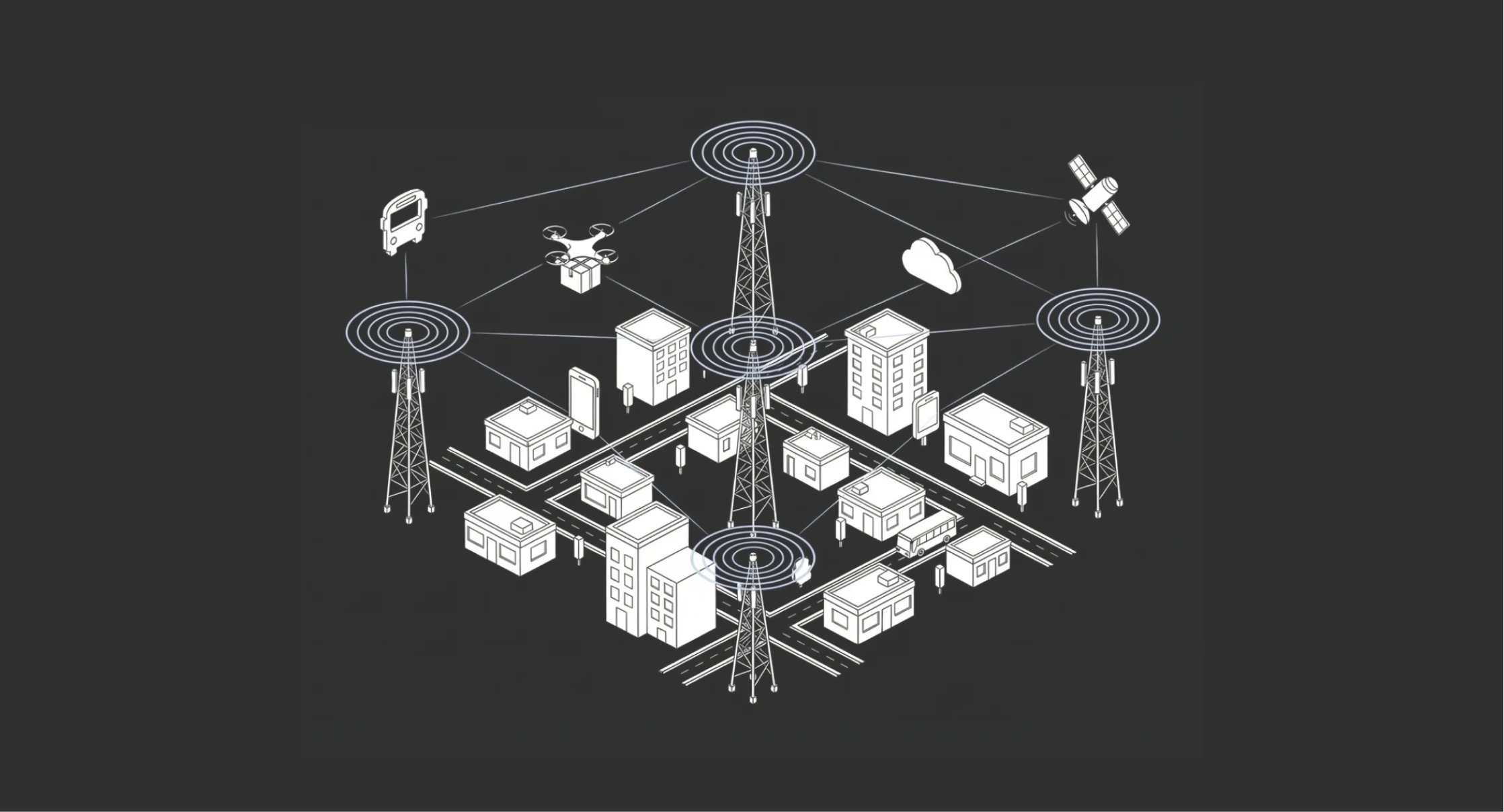

What Does "AI at the Edge" Actually Mean?

Edge AI means running AI models near the source of data rather than in a centralized cloud. In practice, this is a spectrum:

- On-device: inference directly on a machine or sensor, with no external dependencies

- On-prem edge server: a local server processing data from multiple machines inside a facility

- Telco edge: distributed edge data centers inside the carrier network, close to operations

- Radio network: AI inference running on cell towers and base stations

As Sisinio Baldis, Head of Solutions Engineering at Archetype, describes it, the edge is like an onion, with the device at the center and the factory floor, the telco edge, the data center, and the cloud as concentric layers outward. Where you run inference depends on what the use case demands.

Why Edge AI Is Becoming Critical

The shift isn't a preference. It's driven by real constraints in physical environments:

- Latency. In safety-critical systems, even tens of milliseconds can cause missed actions, and the window to act is often shorter than a cloud round-trip.

- Connectivity. Factories, remote sites, and hospitals can't rely on constant internet, and edge AI keeps systems working when links drop.

- Data security. Sensitive operational data and IP often can't leave the environment for regulatory or competitive reasons.

- Cost of moving data. For high-volume modalities like video, the transport bill alone can run into hundreds of thousands of dollars annually before a single inference is performed.

The Hidden Problem: Data Gravity

The biggest reason edge AI is growing is data gravity. The City of Bellevue has 220 intersections with cameras feeding Archetype's models, generating 16 to 18 petabytes of data per year. Moving that volume to any cloud just to run inference would cost $850,000 to $1 million annually, before any action is taken on the data. As Jim Brisimitzis, founder of Khasm Labs, puts it: physics is physics. You can have the best fiber on the planet, but moving petabytes still takes time. The smarter approach is to process data where it's created and send only compressed intelligence events upstream.
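A rough back-of-the-envelope reading of the Bellevue figures can make the scale concrete. The sketch below is illustrative only: it assumes decimal units (1 PB = 1,000,000 GB), assumes the low cost pairs with the low volume and the high cost with the high volume, and assumes data is spread evenly across intersections and days. None of those assumptions come from the session, and the function names are hypothetical.

```python
INTERSECTIONS = 220                     # camera-equipped intersections in Bellevue
PB_PER_YEAR = (16, 18)                  # petabytes generated per year (low, high)
COST_PER_YEAR = (850_000, 1_000_000)    # USD per year to move it (low, high)

GB_PER_PB = 1_000_000                   # assumption: decimal units


def gb_per_intersection_per_day(pb_per_year: float) -> float:
    """Average daily volume per intersection, assuming an even spread."""
    return pb_per_year * GB_PER_PB / INTERSECTIONS / 365


def implied_cost_per_gb(cost_usd: float, pb_per_year: float) -> float:
    """Transport cost per gigabyte implied by an annual bill and volume."""
    return cost_usd / (pb_per_year * GB_PER_PB)


# Assumption: low cost pairs with low volume, high cost with high volume.
for pb, cost in zip(PB_PER_YEAR, COST_PER_YEAR):
    daily = gb_per_intersection_per_day(pb)
    per_gb = implied_cost_per_gb(cost, pb)
    print(f"{pb} PB/yr: {daily:.0f} GB per intersection per day, ${per_gb:.3f} per GB")
```

Under these assumptions each intersection produces on the order of 200 GB per day, and the quoted bill works out to a little over five cents per gigabyte moved.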

Real-World Example: Smart City Safety Systems

A practical example comes from a deployment with the City of Bellevue.

Using edge AI, teams monitored pedestrian safety across intersections in real time.

The system combined:

- Live video feeds

- Traffic signal data

- Real-time AI inference

This allowed the system to:

- Detect unsafe crossing situations

- Extend walk signals automatically

- Delay traffic to prevent accidents

- Provide real-time situational awareness

Instead of just analyzing incidents after they happen, the system actively prevented them.
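As a simplified illustration of the kind of decision described above, the sketch below checks whether a pedestrian can clear the crosswalk before the walk phase ends and, if not, how many extra seconds to request. It is a plain kinematic rule, not how Newton or the Bellevue system actually decides. The names `Pedestrian` and `extension_needed_s`, and the `max_extension_s` cap, are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Pedestrian:
    distance_remaining_m: float   # distance left to the far curb
    speed_m_per_s: float          # estimated walking speed


def extension_needed_s(
    ped: Pedestrian,
    walk_time_left_s: float,
    max_extension_s: float = 10.0,   # hypothetical cap on any single extension
) -> float:
    """Return extra walk time to request, or 0.0 if none is needed."""
    if ped.distance_remaining_m <= 0:
        return 0.0                   # already across
    if ped.speed_m_per_s <= 0:
        return max_extension_s       # stopped in the crosswalk: request the cap
    time_to_clear_s = ped.distance_remaining_m / ped.speed_m_per_s
    shortfall_s = time_to_clear_s - walk_time_left_s
    # Never request a negative extension, and never exceed the cap.
    return min(max(shortfall_s, 0.0), max_extension_s)
```

For example, a pedestrian with 6 m left at 0.5 m/s and 8 seconds of walk time remaining needs 12 seconds to clear, so the rule requests a 4 second extension.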

Why Latency Matters More Than You Think

In testing the deployment, the team measured what happens when you add round-trip latency to the inference loop. Adding just tens of milliseconds produced a 15% drop in performance, because the pipeline involves multiple hops and small increments compound.

Running inference inside the carrier network achieved roughly 5 milliseconds of round-trip latency, close enough to make a decision before the pedestrian leaves the curb. By comparison, the lidar systems Bellevue evaluated would have required dedicated compute at $30,000 to $50,000 per intersection, which doesn't scale across 220 sites.
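The scaling problem with per-intersection compute is simple multiplication. The sketch below assumes every one of the 220 intersections would need its own unit and that the per-site price does not fall with volume. Both are assumptions for illustration, and `citywide_cost` is a hypothetical helper.

```python
INTERSECTIONS = 220
LIDAR_COMPUTE_USD = (30_000, 50_000)   # dedicated compute per intersection (low, high)


def citywide_cost(per_site_usd: int, sites: int = INTERSECTIONS) -> int:
    """Total cost if every site needs its own dedicated compute."""
    return per_site_usd * sites


low, high = (citywide_cost(cost) for cost in LIDAR_COMPUTE_USD)
print(f"${low:,} to ${high:,}")   # prints "$6,600,000 to $11,000,000"
```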

The “Onion Model” of Edge AI

A useful way to think about edge AI is in concentric layers, each representing a different tradeoff between speed, cost, control, and scalability:

- Device-level inference, right on the sensor or machine

- On-site systems, inside the facility

- Local edge infrastructure, serving a cluster of sites

- Telco edge, distributed inside the carrier network

- Cloud, for training and lifecycle management

You don't choose one layer for the whole system. You decide what runs where based on the decision being made. Newton, Archetype's physical AI foundation model, is built to operate across this entire continuum: the 7-billion-parameter base model runs on cloud and on-prem GPUs, and through distillation it can be compressed to 1 billion or under 100 million parameters for edge hardware.
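One way to picture "decide what runs where" is as a lookup from a decision's latency budget to the outermost layer that can still meet it. In the sketch below, only the roughly 5 ms telco-edge round trip comes from the Bellevue measurements. Every other latency figure is an illustrative placeholder to be replaced with your own measurements, and the names `Layer` and `pick_layer` are hypothetical.

```python
from typing import NamedTuple, Optional, Sequence


class Layer(NamedTuple):
    name: str
    round_trip_ms: float


# Ordered from the center of the onion outward (increasing round-trip latency).
LAYERS = [
    Layer("device", 1.0),        # placeholder value
    Layer("on-site", 2.0),       # placeholder value
    Layer("local edge", 3.0),    # placeholder value
    Layer("telco edge", 5.0),    # roughly 5 ms, per the Bellevue measurement
    Layer("cloud", 60.0),        # placeholder value
]


def pick_layer(budget_ms: float, layers: Sequence[Layer] = LAYERS) -> Optional[Layer]:
    """Return the outermost layer whose round trip fits the budget, else None."""
    chosen = None
    for layer in layers:         # relies on layers being ordered inside-out
        if layer.round_trip_ms <= budget_ms:
            chosen = layer
    return chosen
```

Preferring the outermost layer that fits is a design choice in this sketch, not a rule from the session: it favors shared compute over dedicated hardware at every site whenever the latency budget allows it.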

Physical AI + Edge AI: A Powerful Combination

Edge AI is far more valuable when paired with a physical AI foundation model. Newton is trained, fully self-supervised, on approximately 600 million real-world sensor measurements and generalizes across sensor types and operating conditions. That generalization is what makes edge deployment practical: you're not shipping a brittle, narrow model.

A traditional vision model might tell you a person is in a frame; a foundation model can reason about whether that person is walking too slowly to clear the crosswalk. Built on the Archetype Platform, a full-stack Physical AI platform, agents can reason about situations, not just detect objects.

Common Edge AI Use Cases

Across industries, the pattern is consistent: high data volume, real-time decisions, and critical operations. These use cases map to Archetype's three solution packages: continuous process monitoring, task verification in discrete operations, and safety. Common deployments include:

- Smart cities and airports: traffic monitoring, pedestrian safety, incident detection, crowd analysis

- Manufacturing: process monitoring, predictive maintenance, quality control, including distribution centers with maintenance windows of just four hours a day

- Energy and utilities: grid management, substation monitoring, vegetation management, increasingly critical now that EV charging has made historical load patterns unreliable

- Logistics and fleets: asset tracking, maintenance optimization, HVAC and route efficiency

The Shift from Cloud Thinking to Edge Thinking

Cloud AI optimizes for capability: effectively unlimited compute, centralized data, abstracted infrastructure. Edge AI optimizes for constraints: hardware limits, network reliability, and distributed state. The biggest assumption to discard is that you'll always know where your compute is and what's on it. At the edge, placement is the point. You need to know exactly what hardware your model runs on, what happens when the link drops, and how you'll update it later.

Key Challenges When Deploying Edge AI

Teams underestimate how many things change at the edge:

- Hardware awareness: knowing exactly what compute your model runs on

- Connectivity failures: systems must keep working when links drop

- Data filtering: only meaningful events go upstream, not raw streams

- Observability: monitoring is harder in distributed systems

- System updates: refreshing models without taking the operation offline

The approach Khasm uses in Bellevue is microservices-based. Each component can be cycled independently, models can be swapped during low-traffic windows, and the overall system stays available throughout. Complexity increases, but so do the economic benefits, particularly when one inference engine can aggregate signal across many edge locations rather than requiring dedicated compute at each.
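Two of the challenges above, surviving dropped links and sending only meaningful events upstream, can be sketched together as a small store-and-forward buffer. This is an illustration, not Khasm's implementation: `Event`, `EventBuffer`, the `min_score` threshold, the `max_pending` limit, and the `send` callback are all hypothetical.

```python
from collections import deque
from dataclasses import dataclass
from typing import Callable, Deque


@dataclass
class Event:
    kind: str      # e.g. "near_miss"
    score: float   # model confidence that the event matters


class EventBuffer:
    """Filters events locally and holds them while the uplink is down."""

    def __init__(
        self,
        send: Callable[[Event], bool],   # returns True once delivered upstream
        min_score: float = 0.8,
        max_pending: int = 1000,
    ) -> None:
        self._send = send
        self._min_score = min_score
        # When full, the oldest pending event is dropped to bound memory.
        self._pending: Deque[Event] = deque(maxlen=max_pending)

    def submit(self, event: Event) -> None:
        """Drop low-value events, queue the rest, then try to deliver."""
        if event.score < self._min_score:
            return
        self._pending.append(event)
        self.flush()

    def flush(self) -> int:
        """Send queued events in order, stopping at the first failure."""
        sent = 0
        while self._pending:
            if not self._send(self._pending[0]):
                break                    # link is down: keep the event for later
            self._pending.popleft()
            sent += 1
        return sent

    @property
    def pending(self) -> int:
        return len(self._pending)
```

Local inference keeps running regardless of link state in this design. Only the upstream reporting waits, and calling `flush()` when connectivity returns drains the backlog in order.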

How to Approach Edge AI Projects

Start with the problem, not the infrastructure. Khasm and Archetype opened the Bellevue conversation with the city's most pressing operational problem: pedestrian safety, backed by a DOT grant. They have since extended the same architecture to accident detection and E-911 dispatch, and utility substation monitoring and manufacturing now map onto the same approach.

The practical filter is simple: clear financial impact, sensor data already in place, and latency measured in units that matter to operations.

Cloud vs. Edge: It Is Not Either/Or

Edge isn't a replacement for the cloud; they're complementary. Edge handles real-time inference and immediate action, while the cloud handles training, fine-tuning, analytics, and lifecycle management.

Edge inference produces compressed events that flow to the cloud for retraining, and updated models flow back through standard deployment pipelines. Newton supports this loop natively, with deployment flexibility across hyperscaler cloud (AWS, Azure, GCP), private VPC, on-premises, and edge.

Final Thoughts

AI is moving out of the cloud and into the real world, and the unlock isn't better models. It's placing intelligence where it can act immediately. The companies that figure out the edge layer of their AI architecture now will own the operational data, the deployment patterns, and the muscle to scale into use cases their competitors can't yet see.

About This Webinar

This post is based on insights shared during “Physical AI at the Edge: How Local, Real-Time AI is Powering Physical Operations”, featuring Aristo Chang, Jim Brisimitzis, Steve Broughton, and Sisinio Baldis.

To explore the Archetype AI platform and edge deployments, visit Archetype AI or connect on LinkedIn and X (@PhysicalAI).

FAQ: Edge AI and Physical AI

What is edge AI?

Edge AI is the practice of running AI models near the source of data instead of relying entirely on cloud infrastructure.

Why is edge AI important?

It enables faster decisions, reduces latency, improves reliability, and lowers data transfer costs.

What is data gravity?

Data gravity refers to the tendency of large datasets to be expensive and slow to move, making it more efficient to process them where they are generated.

Can edge AI replace cloud AI?

No. Edge AI handles real-time processing, while cloud AI handles training and large-scale analytics.

What industries benefit most from edge AI?

Manufacturing, energy, logistics, smart cities, and any environment with real-time physical operations.

How do you start with edge AI?

Start with a high-impact use case where real-time decisions matter and data volume is high.
